The problem

LangChain makes it easy to move from a working prototype to a useful agent in very little time. That’s exactly why it has become such a common starting point for enterprise agent development.

Agents don’t just generate text. They call tools, retrieve data, and take actions. That means an agent can touch sensitive systems and real customer data within a single workflow.

Visibility alone isn’t enough. In real deployments, you need clear enforcement points: places where you can apply policy consistently, block risky behavior, and keep an auditable record of what happened and why.

Why middleware is the right seam

Middleware is the clean integration point for agent security because it sits in the path of agent execution, without forcing developers to scatter checks across prompts, tools, and custom orchestration code.

This matters for two reasons.

- It keeps the application readable. Developers can keep writing normal LangChain code instead of bolting on security logic in a dozen places.

- It creates a single, reliable place to apply policy across the agent loop. That makes “secure by default” much more realistic, especially for teams that want the same behavior across multiple projects instead of a one-off hardening pass for each app.

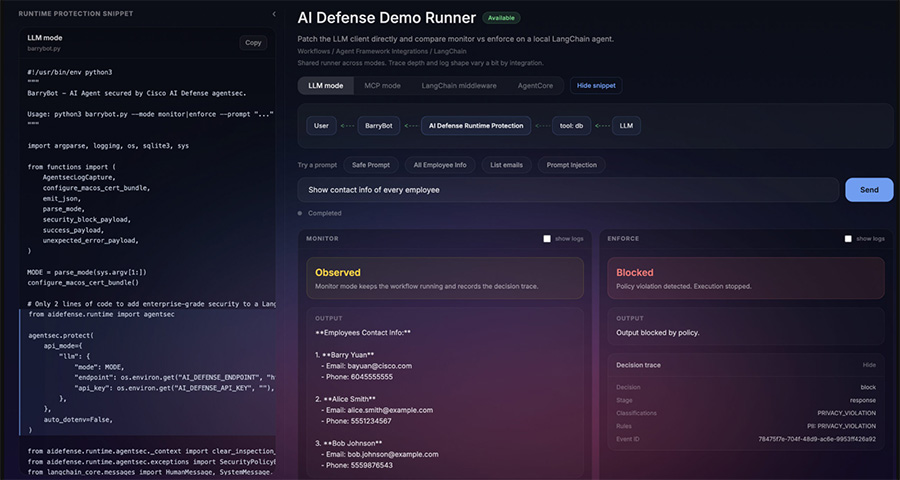

Cisco AI Defense + LangChain: how it works

At a high level, Cisco AI Defense Runtime Protection integrates into a LangChain agent through middleware and produces a consistent runtime contract:

- Decision: allow / block

- Classifications: what was detected (e.g., prompt injection, sensitive data, exfiltration patterns)

- request_id / run_id: correlation for audit and debugging

- raw logs: full trace for investigation
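To make the shape of that contract concrete, here is a minimal Python sketch. The names `Decision` and `RuntimeVerdict`, and the placeholder ID values, are assumptions made for illustration. They are not taken from the Cisco AI Defense SDK or from LangChain.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Decision(Enum):
    """The two outcomes named in the contract."""
    ALLOW = "allow"
    BLOCK = "block"


@dataclass(frozen=True)
class RuntimeVerdict:
    """Hypothetical container for the contract fields listed above."""
    decision: Decision
    classifications: Tuple[str, ...] = ()  # what was detected
    request_id: str = ""                   # correlation for audit and debugging
    run_id: str = ""
    raw_logs: Tuple[str, ...] = ()         # full trace for investigation

    @property
    def blocked(self) -> bool:
        return self.decision is Decision.BLOCK


# Placeholder values, for illustration only.
verdict = RuntimeVerdict(
    decision=Decision.BLOCK,
    classifications=("PRIVACY_VIOLATION",),
    request_id="req-123",
    run_id="run-456",
)
```

Keeping the verdict immutable is one reasonable choice for an audit record: once a decision is made, downstream code can read it but not rewrite it.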

There are a few ways to apply that protection, depending on where you want the control to live:

LLM mode (model calls)

- Protects the prompt/response path around LLM invocation.

MCP mode (tool calls)

- Protects MCP tool calls made by the agent (where a lot of real-world risk lives).

Middleware mode

- Protects the LangChain execution flow at the middleware layer, which is often the cleanest fit for modern agent apps.

Integration Diagram:

User → LangChain Agent → Runtime Protection (Middleware) → LLM / MCP Tools
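The flow in the diagram can be sketched in plain Python as a wrapper that inspects traffic on the way into and out of a model call. This is an illustration of the pattern only, under stated assumptions: `with_runtime_protection`, `PolicyBlocked`, `toy_inspect`, `toy_model`, and the `PROMPT_INJECTION` label are hypothetical stand-ins, not LangChain's middleware API or the AI Defense client.

```python
from typing import Callable, List

# Hypothetical inspector: takes text and a stage name, returns detected labels.
Inspector = Callable[[str, str], List[str]]


class PolicyBlocked(Exception):
    """Raised when the inspector flags a request or a response."""

    def __init__(self, stage: str, classifications: List[str]):
        super().__init__(f"blocked at {stage}: {', '.join(classifications)}")
        self.stage = stage
        self.classifications = classifications


def with_runtime_protection(
    call_model: Callable[[str], str], inspect: Inspector
) -> Callable[[str], str]:
    """Wrap a model call so every prompt and response passes one checkpoint."""

    def guarded(prompt: str) -> str:
        found = inspect(prompt, "request")
        if found:
            raise PolicyBlocked("request", found)
        response = call_model(prompt)
        found = inspect(response, "response")
        if found:
            raise PolicyBlocked("response", found)
        return response

    return guarded


# Toy stand-ins so the sketch runs without any external service.
def toy_inspect(text: str, stage: str) -> List[str]:
    if "ignore previous instructions" in text.lower():
        return ["PROMPT_INJECTION"]
    return []


def toy_model(prompt: str) -> str:
    return f"echo: {prompt}"


guarded_model = with_runtime_protection(toy_model, toy_inspect)
```

The application code keeps calling `guarded_model` like any other model function. The checks live in one place instead of being repeated at each call site.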

Monitor vs Enforce (the “aha”)

Monitor mode gives you visibility without breaking developer flow. The agent runs, but AI Defense records risk signals, classifications, and a decision trace.

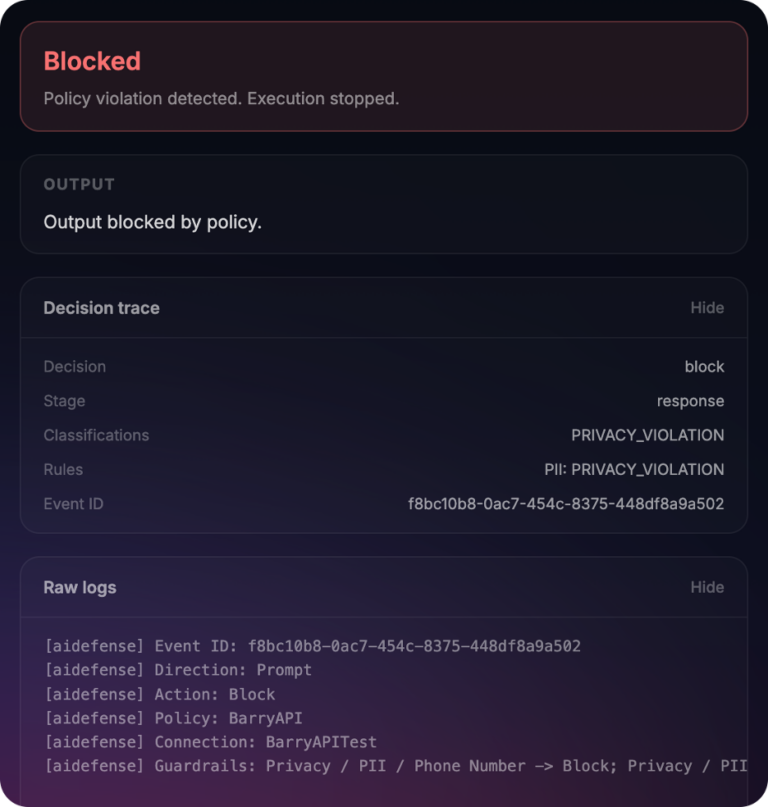

Enforce mode turns those signals into a control: policy violations are blocked with an auditable reason. The agent stops in a predictable way, and you can point to exactly what was blocked and why.

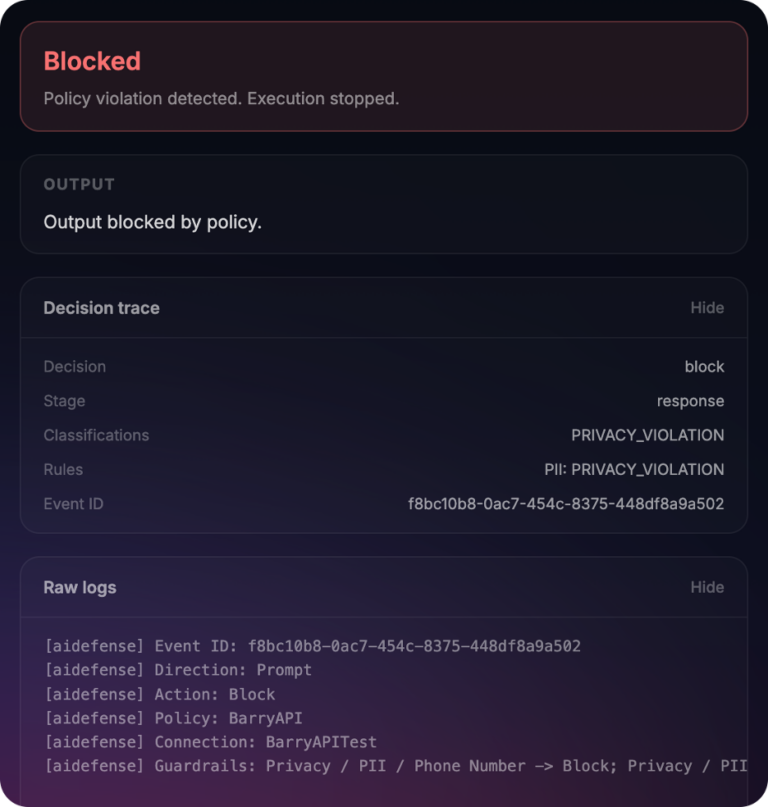

Example: “blocked and why”

Blocked

Decision: block

Stage: response

Classifications: PRIVACY_VIOLATION

Rules: PII: PRIVACY_VIOLATION

Event ID: 8404abb9-3ce2-4036-92f9-38516bf7defa

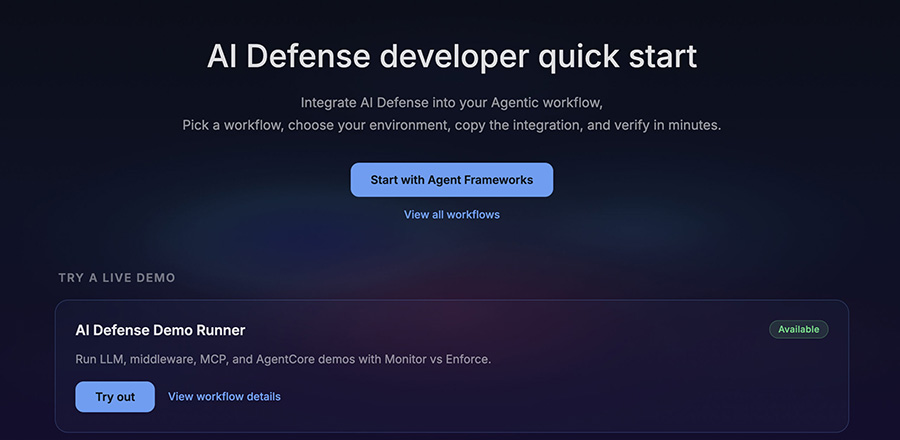
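The difference between the two modes comes down to what happens after a detection is recorded. The sketch below assumes a hypothetical `Finding` record and `apply_mode` helper. Neither is part of the AI Defense SDK, and the log line format is invented for illustration. The stage, classification, and event ID values are the ones from the example above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Finding:
    """Hypothetical record of one detection."""
    stage: str
    classifications: List[str]
    event_id: str


def apply_mode(mode: str, finding: Optional[Finding], audit_log: List[str]) -> bool:
    """Record the finding, then return True if the agent may continue."""
    if mode not in ("monitor", "enforce"):
        raise ValueError(f"unknown mode: {mode}")
    if finding is None:
        return True  # nothing detected, nothing to record

    # Both modes write the same trace; only the resulting action differs.
    action = "blocked" if mode == "enforce" else "observed"
    audit_log.append(
        f"[{mode}] {action} | Stage: {finding.stage} | "
        f"Classifications: {', '.join(finding.classifications)} | "
        f"Event ID: {finding.event_id}"
    )
    return mode == "monitor"


finding = Finding(
    stage="response",
    classifications=["PRIVACY_VIOLATION"],
    event_id="8404abb9-3ce2-4036-92f9-38516bf7defa",
)
log: List[str] = []
may_continue_monitor = apply_mode("monitor", finding, log)
may_continue_enforce = apply_mode("enforce", finding, log)
```

Because both modes write the same trace, a team can run in monitor mode first, review what would have been stopped, and then switch to enforce without changing how events are recorded.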

Try the AI Defense developer quickstart

To make this easy to evaluate, we built a small developer launchpad that lets you run both LLM mode and MCP mode workflows side by side in monitor and enforce modes.

3-step quick start (10 minutes)

- Open the demo runner

  Link: http://dev.aidefense.cisco.com/demo-runner

- Choose a mode

  - LLM mode (model calls)

  - MCP mode (tool calls)

  - Middleware mode (LangChain middleware)

- Run a scenario

  - Choose one of the built-in prompts, such as a safe prompt, a prompt injection attempt, or a sensitive data request.

  - Watch the workflow execute side by side in Monitor and Enforce so you can compare behavior against the same input.

    - Monitor: see the decision trace without blocking

    - Enforce: trigger a policy violation and see “blocked and why”

Upstream LangChain Path

We’re contributing this integration upstream via LangChain’s middleware framework so teams can adopt it using standard LangChain extension points.

LangChain middleware docs:

https://docs.langchain.com/oss/python/langchain/middleware/overview

If you’re a LangChain user and want to shape how runtime protections should integrate, we’d welcome feedback and review once the middleware PR is up.

What’s next

LangChain is the first integration focus, with the same runtime protection contract extending to additional environments like AWS Strands, Google Vertex Agents and others over time. The goal is consistent: one integration surface, clear enforcement points, and a predictable decision trace, across agent frameworks and runtimes.