Enterprise Autonomous Agents: Powered by NVIDIA’s Open Source AI Runtime and Secured by Cisco AI Defense

OpenClaw showed the world how autonomous, self-evolving agents are a step-change in how software works. But in the enterprise, that kind of power without governance isn’t innovation; it’s unmanaged risk. These agents are already live and running now: reading configurations, querying knowledge graphs, triggering compliance workflows, and reaching external tools.

The question is simple: do your controls match their access?

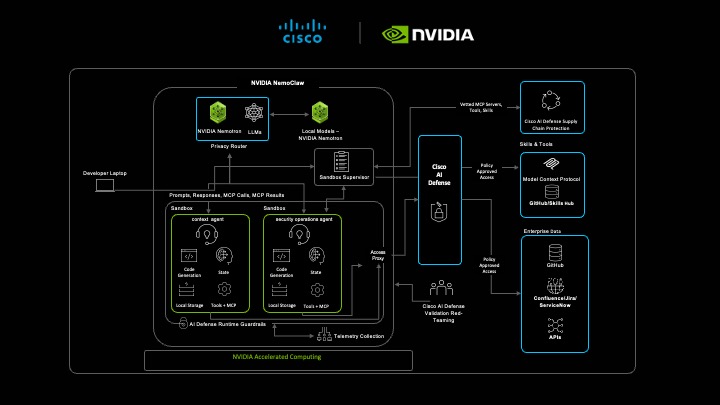

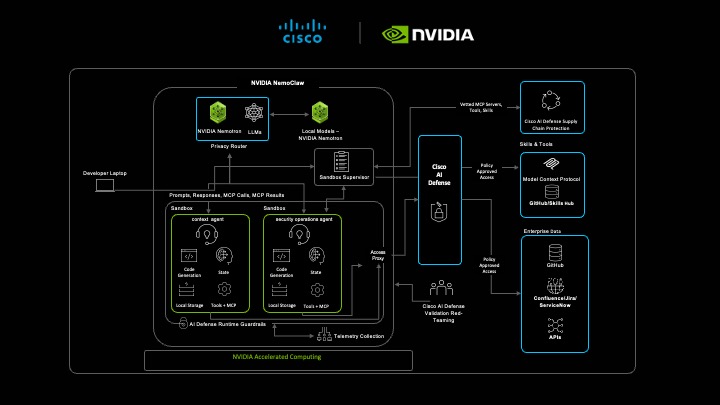

The NVIDIA OpenShell open source agent runtime provides guardrails at the infrastructure level through isolated sandboxes for each agent, a fine-grained policy engine, and a privacy router. Cisco AI Defense defines the boundaries, ensuring and keeping a continuous record that agent behavior matches what policy allows as the agent reaches for additional skills and tools to meet its objectives.

Think of it this way. OpenShell constrains what agents can do. Cisco AI Defense enforces what they do and verifies what they did. Together, they make the answer to “can we trust this agent in a critical workflow?” provable, not just probable.

Autonomous enterprise agents: NVIDIA OpenShell enforces the boundary. Cisco AI Defense verifies everything inside it.

What does this look like in action? Consider this fictional scenario:

It’s Friday, 6:45 PM.

A critical zero-day advisory bulletin drops.

In most organizations, this moment triggers a familiar chain reaction: someone pulls an asset list, someone else starts pinging the weekend rotation, and everyone quietly hopes the blast radius is small. The race is on, but it’s a race typically run in the dark and in a panic.

This post is about a different kind of Friday night.

Act I: Start from Truth, Not Panic

We’ve been preparing for this day. Before the security bulletin lands, Cisco’s enterprise agents are already running quietly in the background.

In Cisco AI Canvas, a context agent has been continuously reading device configurations, ingesting show-command outputs, and mapping telemetry into a live knowledge graph. Every router, switch, and firewall in the environment is a node. Every dependency, version string, and role is a relationship.

So, when the new security advisory drops, we don’t start from zero. We start from a known baseline: a live knowledge graph.

The agent already knows which devices are running which software versions. It understands which nodes sit at the edge, which are internal, and how they depend on one another. That context, built incrementally and continuously over time, is what makes the next step possible.

This is the core premise of autonomous long-running agents: moving beyond a chatbot that merely answers questions to a long-running agentic system that accumulates understanding and then applies it when it matters most.

Act II: Reason Fast, Enforce Faster

The new advisory auto-triggers a security operations agent in Cisco AI Canvas that takes the bulletin and gets to work. It reads the security advisory, interprets the vulnerability logic, and starts mapping it against real device state pulled from the knowledge graph.

This isn’t keyword matching. The agent:

- Parses the bulletin to understand the conditions under which a device is vulnerable

- Queries the knowledge graph to find matching devices

- Evaluates blast radius: which devices are affected, and what do they connect to?

- Plans remediation and recommends mitigations, prioritized by risk, reachability, and change impact
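To make those four steps concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the device names, the `DEVICES` and `LINKS` structures, the purely numeric dotted version strings, and the "fixed in" rule stand in for a real knowledge graph and a real advisory, neither of which is described at this level in the post.

```python
from collections import deque

# Hypothetical stand-in for the knowledge graph: device -> attributes.
DEVICES = {
    "edge-rtr-1": {"version": "17.3.1", "role": "edge"},
    "core-sw-1": {"version": "17.3.1", "role": "internal"},
    "fw-1": {"version": "17.6.2", "role": "edge"},
    "core-sw-2": {"version": "17.6.2", "role": "internal"},
}
# Hypothetical dependency edges, treated as undirected.
LINKS = [("edge-rtr-1", "core-sw-1"), ("core-sw-1", "core-sw-2"), ("fw-1", "core-sw-2")]


def parse_version(text):
    """Turn '17.3.1' into (17, 3, 1). Assumes numeric dotted versions only."""
    return tuple(int(part) for part in text.split("."))


def vulnerable_devices(devices, fixed_in):
    """Devices whose version is older than the (assumed) first fixed release."""
    fixed = parse_version(fixed_in)
    return sorted(
        name for name, attrs in devices.items()
        if parse_version(attrs["version"]) < fixed
    )


def blast_radius(links, seeds, max_hops=1):
    """Unaffected devices within max_hops of any affected device (BFS)."""
    neighbors = {}
    for a, b in links:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue
        for nxt in sorted(neighbors.get(node, ())):
            if nxt not in distance:
                distance[nxt] = distance[node] + 1
                queue.append(nxt)
    return sorted(name for name in distance if name not in seeds)


def prioritize(devices, names):
    """Edge devices first (more exposed), then alphabetical."""
    return sorted(names, key=lambda n: (devices[n]["role"] != "edge", n))
```

Calling `vulnerable_devices(DEVICES, "17.6.1")` returns the two devices on the older release, `blast_radius` finds what they connect to, and `prioritize` puts the edge router ahead of the internal switch. A production agent would weigh far more than role and version, but the shape is the same: match conditions, walk relationships, then rank.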

But the capability is only half the story: this entire reasoning workflow runs inside NVIDIA OpenShell, an open source sandbox environment designed specifically for autonomous, long-running agents.

OpenShell wraps the agent in runtime-enforced constraints:

- Sandbox containment: The agent operates in a contained environment. It cannot reach outside its permitted boundary, and access is restricted on a need-to-know basis.

- Deny-by-default access: The agent starts with zero permissions. It only gets access to what policy explicitly allows; nothing more.

- Per-endpoint network policy: Tool calls are filtered against an approved list. Unverified packages are blocked.

- Privacy routing: Sensitive data stays local. Prompts to cloud inference are anonymized to protect PII or proprietary data.
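The deny-by-default and per-endpoint ideas are easy to picture in a few lines of Python. This is only a sketch of the principle, not OpenShell’s policy format or API: the `EgressPolicy` class, its fields, and the example hostnames are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple
from urllib.parse import urlsplit


@dataclass(frozen=True)
class EgressPolicy:
    """Hypothetical allow-list of (host, port, HTTP method) triples.

    The default is an empty set, so a fresh policy permits nothing.
    """

    allowed: FrozenSet[Tuple[str, int, str]] = frozenset()

    def permits(self, method: str, url: str) -> bool:
        parts = urlsplit(url)
        # Fall back to the scheme's default port when none is given.
        port = parts.port or (443 if parts.scheme == "https" else 80)
        return (parts.hostname, port, method.upper()) in self.allowed
```

With `EgressPolicy()` every request is denied. Granting `("kg.internal.example", 443, "GET")` lets the agent read from that one endpoint over HTTPS while a `POST` to the same host, or a `GET` to any other host, is still refused. The point of the design is that access is something policy adds, never something the agent has to be talked out of.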

This is a crucial distinction. We’re not trusting the model to do the right thing. We’re constraining it so that the right thing is the only thing it can do. The agent doesn’t have to be perfect. The sandbox, together with tool and skill verification, ensures its imperfections stay contained, and critical enterprise configurations are handled with the utmost care given the sensitivity of the advisory bulletin and the new exposure risk.

Act III: Trust Verified, Not Assumed

Trust in this workflow doesn’t begin when an attack is detected. It begins before the agent runs its first task.

Every tool, MCP server, and skill the agent is permitted to reach has been scanned and verified by Cisco AI Defense supply chain risk management capabilities before it ever receives a call. This isn’t a one-time allow-list review; it’s a continuous supply chain posture for AI tooling.

Consider the Report Generator: a third-party formatting skill that produces the final remediation output, a structured PDF with an executive summary, per-device findings, and patch sequencing. On the surface, it’s the least threatening component in the workflow. But a compromised or poisoned version of this skill could silently omit critical findings from the report or embed exfiltration payloads in document metadata, and no one would know until a device went unpatched.

This is the AI skills supply chain problem. The attack surface isn’t just the reasoning model or the live tool calls. It’s every dependency the agent touches, including the ones that format the output. Only skills verified by AI Defense are made available to the agent. If a skill hasn’t been vetted, it doesn’t appear in the catalog.
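One simple way to picture a catalog like this is digest pinning: a skill is only handed to the agent if its bytes match what was vetted. The post does not say how verification is actually implemented, so treat the sketch below as an assumption throughout; `VerifiedCatalog`, `register`, and `resolve` are hypothetical names, and real scanning does far more than compare hashes.

```python
import hashlib


def digest(artifact: bytes) -> str:
    """SHA-256 hex digest of a skill artifact."""
    return hashlib.sha256(artifact).hexdigest()


class VerifiedCatalog:
    """Hypothetical catalog: only vetted, unmodified skills can be resolved."""

    def __init__(self):
        self._pinned = {}  # skill name -> digest recorded at vetting time

    def register(self, name: str, artifact: bytes) -> None:
        # In this sketch, registering stands in for the vetting step.
        self._pinned[name] = digest(artifact)

    def resolve(self, name: str, artifact: bytes) -> bytes:
        pinned = self._pinned.get(name)
        if pinned is None:
            # Unvetted skills simply are not in the catalog.
            raise LookupError(f"skill {name!r} is not in the catalog")
        if digest(artifact) != pinned:
            # Same name, different bytes: treat as tampered.
            raise ValueError(f"skill {name!r} does not match its vetted digest")
        return artifact
```

A poisoned build of the Report Generator would fail the digest check even though its name is on the list, and a skill nobody vetted cannot be looked up at all.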

Now the agent moves from analysis to action, filing remediation tickets through what appears to be a legitimate internal ticketing integration, an approved MCP server in the pre-verified catalog. This is the most sensitive moment in the workflow: the agent is passing real device identifiers, vulnerability details, and network topology context into an external system outside the sandbox boundary.

AI Defense MCP tool call inspection is already watching, and it already knows what a valid call to this server looks like. It detects unexpected behavior in the outbound request: a covert exfiltration attempt, engineered to capture the sensitive device data the agent is transmitting at exactly the moment it has the most to send.

The inspection reveals a malicious signature embedded in the MCP payload: a prompt injection designed to exfiltrate device configuration data and redirect the agent’s remediation recommendations. It is flagged as an unexpected behavioral anomaly.

Here’s what happens:

- The MCP call is blocked at the AI Defense Gateway before any payload is processed

- The workflow is contained; sensitive data never leaves the environment

- An alert for the tool call is created in AI Defense for review

- The agent continues operating on pre-verified trusted sources without interruption
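The block-alert-continue pattern in that list can be sketched as a small gate in front of each tool call. This is a toy, not how AI Defense inspects MCP traffic: the `EXPECTED_ARGS` table, the `create_ticket` tool, and the "unexpected argument" rule are all assumptions chosen to keep the example short.

```python
# Hypothetical description of what a valid call to each tool looks like.
EXPECTED_ARGS = {
    "create_ticket": {"device_id", "advisory_id", "summary"},
}


def inspect_call(tool, args, alerts):
    """Return True if the call may proceed; otherwise record an alert.

    A blocked call does not raise, so the calling workflow can carry on
    with its remaining, trusted tools.
    """
    expected = EXPECTED_ARGS.get(tool)
    if expected is None:
        reason = "unknown tool"
    else:
        unexpected = sorted(set(args) - expected)
        reason = f"unexpected arguments: {unexpected}" if unexpected else None
    if reason is not None:
        alerts.append({"tool": tool, "reason": reason})
        return False
    return True
```

A ticket request carrying only the three expected fields passes. The same request with an extra `callback_url` field is refused before anything is sent, and the alert list gains one entry for a reviewer to examine.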

The pre-verified trusted tool catalog does more than stop attacks. It closes the gap between what an agent should be able to do and what it can do at runtime.

This is the difference between deploying an agent and trusting an agent. OpenShell constrains what it can do at the infrastructure level. Cisco AI Defense verifies that everything it’s allowed to reach was trustworthy before it got there and confirms it behaved as expected.

By 8:00 PM, a little over an hour after the bulletin dropped, the security team has:

- A validated list of impacted devices, mapped against real configuration state

- A dependency-aware remediation plan that accounts for network topology and is prioritized by exposure risk

- An audit-grade trace of every reasoning step, tool call, and decision point

The New Standard for the Autonomous Enterprise

Ultimately, the goal is to move beyond the ‘black box’ of AI. OpenShell provides the sandbox, and Cisco AI Defense provides the verification layer that makes autonomous agents safe for the enterprise. When you can prove exactly what an agent is doing, and why, you stop managing risk and start scaling innovation. That’s the new standard for the autonomous enterprise.